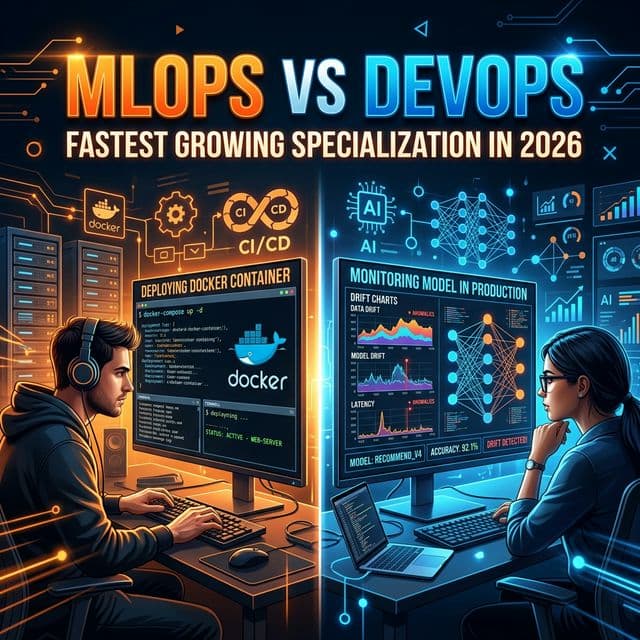

MLOps vs DevOps: The Fastest-Growing Specialization in Cloud Engineering — And How to Break In

In 2020, I'd never heard a client mention "MLOps." In 2026, every company building AI products is desperately looking for engineers who understand both ML systems and production infrastructure. That gap between supply and demand is your career opportunity.

WHAT IS MLOPS, REALLY?

MLOps is DevOps for machine learning systems. A traditional web application has one pipeline: code → test → deploy → monitor. An ML system has multiple interconnected pipelines: Data, Training, Validation, Serving, and Monitoring.
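To make the five pipelines concrete, here is a minimal sketch that chains them as plain Python functions. Everything in it is an illustrative assumption: the toy `ThresholdModel`, the hard-coded dataset, and the `min_accuracy` gate are hypothetical stand-ins for real data sources, training jobs, and serving infrastructure.

```python
from dataclasses import dataclass
from statistics import mean


@dataclass
class ThresholdModel:
    """Toy model (hypothetical): predicts 1 when the feature exceeds a threshold."""
    threshold: float

    def predict(self, x: float) -> int:
        return int(x > self.threshold)


def data_stage() -> list[tuple[float, int]]:
    # Data pipeline: a real system would ingest, validate, and version a dataset here.
    return [(0.1, 0), (0.2, 0), (0.4, 0), (0.6, 1), (0.8, 1), (0.9, 1)]


def training_stage(rows: list[tuple[float, int]]) -> ThresholdModel:
    # Training pipeline: place the threshold halfway between the two class means.
    negatives = mean(x for x, y in rows if y == 0)
    positives = mean(x for x, y in rows if y == 1)
    return ThresholdModel(threshold=(negatives + positives) / 2)


def validation_stage(model: ThresholdModel, rows, min_accuracy: float = 0.9) -> float:
    # Validation pipeline: block the release if accuracy is below the gate.
    # A real pipeline would score held-out data, not the training rows.
    accuracy = mean(int(model.predict(x) == y) for x, y in rows)
    if accuracy < min_accuracy:
        raise ValueError(f"accuracy {accuracy:.2f} is below the gate {min_accuracy:.2f}")
    return accuracy


def serving_stage(model: ThresholdModel, x: float) -> int:
    # Serving pipeline: answer a single prediction request.
    return model.predict(x)


def monitoring_stage(predictions: list[int]) -> float:
    # Monitoring pipeline: track the share of positive predictions over time.
    return mean(predictions)


def run_pipeline():
    rows = data_stage()
    model = training_stage(rows)
    accuracy = validation_stage(model, rows)
    predictions = [serving_stage(model, x) for x in (0.3, 0.7)]
    return model, accuracy, predictions, monitoring_stage(predictions)
```

The point of the sketch is the shape, not the model: each stage has its own inputs, outputs, and failure mode, which is why each one ends up with its own pipeline in production.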

HOW MLOPS DIFFERS FROM DEVOPS

It's not just about deploying Docker images. MLOps involves versioning data and models, canary testing for model accuracy (not just latency), and monitoring for data drift and prediction degradation.
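Data drift monitoring can be sketched with the Population Stability Index (PSI), one common drift score among several. This is a standard-library illustration, not how Evidently AI or WhyLabs compute drift. The function name `psi`, the bin count, and the `eps` floor are assumptions, and the 0.2 alert level is a widely used rule of thumb rather than a fixed standard.

```python
import math


def psi(baseline: list[float], current: list[float], bins: int = 10, eps: float = 1e-6) -> float:
    """Population Stability Index between a baseline sample and a current sample."""
    lo, hi = min(baseline), max(baseline)
    width = (hi - lo) / bins or 1.0  # fall back to 1.0 if the baseline is constant

    def fractions(values: list[float]) -> list[float]:
        counts = [0] * bins
        for v in values:
            idx = int((v - lo) / width)
            # Values outside the baseline range are clamped into the edge bins.
            counts[min(max(idx, 0), bins - 1)] += 1
        # Floor empty bins at eps so the logarithm below stays defined.
        return [max(c / len(values), eps) for c in counts]

    base, cur = fractions(baseline), fractions(current)
    return sum((c - b) * math.log(c / b) for b, c in zip(base, cur))


# Hypothetical feature values: training-time baseline vs. shifted production traffic.
baseline_values = [i / 100 for i in range(100)]
shifted_values = [v + 0.5 for v in baseline_values]

DRIFT_ALERT = 0.2  # rule-of-thumb threshold, an assumption to tune per feature
drift_detected = psi(baseline_values, shifted_values) > DRIFT_ALERT
```

A latency dashboard would show nothing wrong in this scenario; the service is healthy, but the inputs no longer look like the training data.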

THE MLOPS TECH STACK IN 2026

- Experiment Tracking: MLflow, Weights & Biases

- Feature Stores: Feast, Tecton

- Pipeline Orchestration: Kubeflow Pipelines, ZenML

- Model Serving: BentoML, AWS SageMaker Endpoints

- Model Monitoring: Evidently AI, WhyLabs

THE 12-MONTH MLOPS TRANSITION PLAN

Start with basic deep learning (fast.ai) and set up MLflow locally on sample data. Then move to Kubeflow Pipelines on Minikube. Finally, deploy a real ML model via CI/CD and add drift monitoring. MLOps engineers with 2-4 years of experience are commanding a 40-60% salary premium over traditional DevOps engineers.
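For the CI/CD step, one thing worth practicing is a promotion gate that compares a candidate model against the production model on accuracy, not just on whether the container starts. The sketch below is a hypothetical example: `should_promote`, `gate_exit_code`, and the `max_regression` tolerance are made-up names and values, and a real pipeline would load predictions from an evaluation job instead of hard-coding them.

```python
def accuracy(predictions: list[int], labels: list[int]) -> float:
    """Fraction of predictions that match the labels."""
    if not labels or len(predictions) != len(labels):
        raise ValueError("predictions and labels must be non-empty and the same length")
    return sum(p == y for p, y in zip(predictions, labels)) / len(labels)


def should_promote(candidate_preds: list[int], production_preds: list[int],
                   labels: list[int], max_regression: float = 0.01) -> bool:
    """Promote only if the candidate is not meaningfully worse than production."""
    candidate_acc = accuracy(candidate_preds, labels)
    production_acc = accuracy(production_preds, labels)
    return candidate_acc >= production_acc - max_regression


def gate_exit_code(candidate_preds, production_preds, labels) -> int:
    # A CI job can pass this to sys.exit(): 0 lets the deploy continue, 1 stops it.
    return 0 if should_promote(candidate_preds, production_preds, labels) else 1


# Hypothetical holdout labels and predictions from the two models.
labels = [1, 0, 1, 1, 0, 1, 0, 0, 1, 1]
production_preds = [1, 0, 1, 1, 0, 1, 0, 0, 0, 0]  # 8 of 10 correct
good_candidate = [1, 0, 1, 1, 0, 1, 0, 0, 1, 0]    # 9 of 10 correct
bad_candidate = [0, 1, 0, 0, 0, 1, 0, 0, 1, 1]     # 6 of 10 correct
```

Wiring `gate_exit_code` into a pipeline step means a model that regresses on accuracy fails the build the same way a failing unit test would.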